Update: After I initially wrote this article, YouTube added support for a new codec called AV1 (aka AV01).

AV1 is not to be confused with the old AVC1 I write about in the article.

AV1 offers better quality than VP09 – even at lower bit rates.

It doesn’t look like you can force YouTube to play your own videos with the AV1 video codec.

It appears that YouTube is still testing the codec on their chosen videos.

But you can tell YouTube to play videos in AV1 whenever possible by going to the playback and performance page under your YouTube account and choosing “Always prefer AV1”.

Then you can go to the AV1 Beta playlist page and see videos compressed with the AV1 video format.

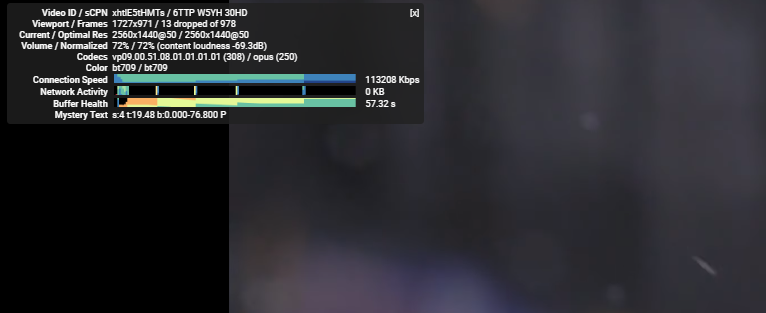

You can see if a video plays with AV1 by using “Stats for nerds,” as described under the “How to know which codec YouTube has used to compress your video” subheading further down in this article.

So you’ve just finished editing a video, and everything looks great when you watch it on your computer.

Then you upload it to YouTube, and the image quality looks horrible due to the compression YouTube applies to your footage. Sucks right?

In this article, I’ll guide you through some tips and tricks to ensure you maintain the quality of the video after you’ve published it to YouTube.

I also recommend you read the article on how to export high quality videos with low file sizes in Adobe Premiere.

It’s a simple step-by-step guide that covers all the best practices for exporting videos with the highest quality possible.

But first, let’s look at what can influence YouTube video quality…

Why is the image quality bad on YouTube?

No matter what codec you use when you export your video from your editing program of choice, YouTube will apply extra compression to your video content when you upload it to reduce the video file size.

That’s great for keeping the file sizes to a minimum on the small YouTube server (irony detection alert!) and ensuring video playback is possible for users with a poor internet connection speed.

But it sucks if you’re a YouTube content creator who has just spent weeks in the video editing cave creating a new video – because this data saver function decreases the image quality of your video.

When YouTube compresses your videos, it can cause digital artifacts.

You can experience anything from blockiness to banding, bad skin tones, and blurry YouTube videos.

Such artifacts are often seen in dark areas or shadows, in large areas of a single color, and in blurry shallow depth-of-field backgrounds.

Digital artifacts are also common in footage containing film grain, smoke, or other random particles.

The reason for this lies in how interframe compression is designed.

Interframe compression doesn’t like randomness in video footage

Interframe compression works by throwing away information (data) that appears similar across frames, e.g., a dark corner in a kitchen scene in your short film.

When an area changes only slightly from frame to frame (such as in shadows), the codec might interpret it as a single dark area and throw away much of the information in the shadows.

You’ll end up with a lot of digital blockiness in the dark areas of your frame.

YouTube can also have difficulty compressing footage with a lot of randomness (such as film grain).

The arbitrary nature of film grain, smoke, rain, snow, and other random visible particles will make every frame contain vastly different information from the last.

Randomness gives a codec a hard time. That doesn’t keep the algorithm from trying, and the result is that your nice clean video ends up looking like digital mayhem.
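To get a feel for why grain is so costly, here is a rough sketch in Python. It is not how YouTube's encoder works: zlib stands in for a real video codec, the tiny 64×64 grayscale frames are made up, and all function names are hypothetical. It only illustrates that the difference between two frames is cheap to store when nothing changes and expensive when every pixel carries random noise.

```python
import random
import zlib

WIDTH, HEIGHT = 64, 64  # tiny grayscale "frames", purely for illustration


def make_static_frame(value=20):
    """A flat dark frame, like a shadowy corner that barely changes."""
    return bytes([value] * (WIDTH * HEIGHT))


def add_grain(frame, rng, strength=12):
    """Add random per-pixel noise, loosely imitating film grain."""
    return bytes(
        min(255, max(0, p + rng.randint(-strength, strength))) for p in frame
    )


def frame_delta(prev, cur):
    """Per-pixel difference between two frames (wrapped to 0..255)."""
    return bytes((c - p) % 256 for p, c in zip(prev, cur))


def compressed_delta_size(prev, cur):
    """Bytes needed to store the frame-to-frame change after zlib compression."""
    return len(zlib.compress(frame_delta(prev, cur), 9))


rng = random.Random(42)  # fixed seed so the run is repeatable
base = make_static_frame()

# Two identical clean frames versus two frames with independent grain.
clean_size = compressed_delta_size(base, base)
grainy_size = compressed_delta_size(add_grain(base, rng), add_grain(base, rng))

print("clean delta :", clean_size, "bytes")
print("grainy delta:", grainy_size, "bytes")
```

The grainy delta comes out far larger than the clean one, because the noise in one frame tells the compressor nothing about the noise in the next. A real codec working within a fixed bitrate has to discard detail to make up for that.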

But don’t take it from me. Watch this excellent video by Tom Scott, which shows what compression can do to footage with many random elements.

I find it interesting that randomness causes bad image quality on YouTube, because manually adding random noise is a well-known trick to battle banding and compression artifacts in still photos you upload to, e.g., Facebook and Instagram.

But if you add heavy film grain (which is random) to your video to get a more organic look or your footage is underexposed and noisy, then you’re setting yourself up for failure.

If you want to test this, the worst thing you can do is manually add black bars plus film grain to your video.

That’s a big no-no! Have I done this when I first started? Oh yes… everyone wants that cinematic look, right?!
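The noise-against-banding trick for still photos mentioned above can be illustrated with a small Python sketch. It makes several simplifying assumptions: a single row of 1000 pixels across a smooth gradient, a deliberately coarse 8-level quantization, and hypothetical function names. It measures banding as the longest stretch of identical neighbouring values.

```python
import random

LEVELS = 8      # deliberately coarse quantization to exaggerate banding
SAMPLES = 1000  # one row of pixels across a smooth gradient


def quantize(value, levels=LEVELS):
    """Snap a 0..1 value to one of `levels` evenly spaced integer steps."""
    top = levels - 1
    return min(top, max(0, round(value * top)))


def longest_run(values):
    """Length of the longest stretch of identical neighbours (a visible band)."""
    best = run = 1
    for prev, cur in zip(values, values[1:]):
        run = run + 1 if cur == prev else 1
        best = max(best, run)
    return best


rng = random.Random(7)  # fixed seed so the run is repeatable
gradient = [i / (SAMPLES - 1) for i in range(SAMPLES)]

plain = [quantize(v) for v in gradient]

# Noise of up to half a quantization step, added before quantizing.
half_step = 0.5 / (LEVELS - 1)
dithered = [quantize(v + rng.uniform(-half_step, half_step)) for v in gradient]

print("longest band without noise:", longest_run(plain))
print("longest band with noise   :", longest_run(dithered))
```

In a single still image, the noise breaks the long bands into short, irregular runs that the eye blends together. In video, the same noise changes on every frame, which is exactly the kind of randomness interframe compression struggles with.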

How to increase your image quality on YouTube

So what can you do about it?

When you upload a video to your YouTube channel, YouTube will reencode and recompress your footage. This will cause a loss of quality no matter what you do.

So it doesn’t matter what codec you use to export your video, as long as you use a container that YouTube will accept and don’t use a setting (bitrate) that degrades the footage too much.

YouTube has two codecs it automatically chooses between: avc1 (H.264) and vp9. The vp9 codec offers a much higher picture quality than avc1.

How to know which codec YouTube has used to compress your video

But how do you know which codec YouTube has used to reencode your video?

To see which codec a video uses, right-click on the video (or on the gear cog where you would change the video resolution) and select “Stats for nerds”. The codec is listed there.
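Because the identifiers avc1, vp09, and av01 look so similar, a small helper can make the distinction explicit. The Python sketch below is only an illustration: the function name is hypothetical, and the sample strings are examples of what a codec entry may look like, not values taken from a specific video.

```python
def codec_family(stats_codec: str) -> str:
    """Map a codec identifier to a human-readable codec family.

    Only the prefix is inspected: avc1, vp09 (or vp9), and av01 are the
    identifiers discussed in this article.
    """
    ident = stats_codec.strip().lower()
    if ident.startswith("av01"):
        return "AV1"
    if ident.startswith("avc1"):
        return "H.264/AVC"
    if ident.startswith(("vp09", "vp9")):
        return "VP9"
    return "unknown"


# Illustrative sample strings (assumed format: identifier plus a stream number).
samples = (
    "avc1.4d401f (136)",
    "vp09.00.51.08.01.01.01.01.00 (248)",
    "av01.0.08M.08 (399)",
)
for sample in samples:
    print(f"{sample:40} -> {codec_family(sample)}")
```

Note that AV1 (av01) and AVC (avc1) differ by a single character in their identifiers, which is why the two are so easily mixed up.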

Force YouTube to always use VP9

I’ve read about several attempts to force YouTube to always use vp9 whenever you upload a video.

One of these tricks involves adding 1% saturation to your video with YouTube’s video editor, which seemed to work for a while. But I’ve not found it to work any longer.

Another trick was to upload a single short video in 4K.

While it works for the 4K video itself, I’ve found that it doesn’t automatically apply vp9 to later videos uploaded in a lower resolution like 1080p.

So how can you increase the quality of the YouTube video, then?

There seems to be a consensus among YouTube users online that if you run a big channel with millions of subscribers, vp9 will be the standard codec applied.

Since I don’t have such a channel, I have no way of testing whether this is true. If you do, please share your experience in the comment section below.

You have to take another approach if you’re not a big YouTube star.

If you browse to the YouTube help section on recommended upload encoding settings, you’ll find this note under the recommended video bitrates for SDR uploads:

To view new 4K uploads in 4K, use a browser or device that supports VP9.

Source:

https://support.google.com/youtube/answer/1722171?hl=en

And below this statement are a couple of tables (one for SDR and one for HDR) with recommended bitrates.

I’ve taken the liberty to reproduce the most commonly used video sizes for SDR (as HDR videos aren’t that common yet) in the table below:

| Video Size | 2160p (4k) | 1440p (2k) | 1080p (FullHD) | 720p (HD) |
| --- | --- | --- | --- | --- |
| Video Bitrate for 24, 25, and 30 fps | 35-45 Mbps | 16 Mbps | 8 Mbps | 5 Mbps |
| Video Bitrate for 48, 50, and 60 fps | 53-68 Mbps | 24 Mbps | 12 Mbps | 7.5 Mbps |
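If you want to look these numbers up from a script, the table can be written as a small Python lookup. This is only a convenience sketch: the values are copied from the table above, the names are hypothetical, and treating every frame rate above 30 fps as the high frame rate row is my assumption for in-between rates.

```python
# Recommended SDR upload bitrates in Mbps, copied from the table above.
# Each entry is (low, high); single values are stored as (x, x).
RECOMMENDED_MBPS = {
    "2160p": {"standard": (35, 45), "high": (53, 68)},
    "1440p": {"standard": (16, 16), "high": (24, 24)},
    "1080p": {"standard": (8, 8), "high": (12, 12)},
    "720p": {"standard": (5, 5), "high": (7.5, 7.5)},
}


def recommended_bitrate(resolution: str, fps: float) -> tuple:
    """Return the (low, high) recommended bitrate in Mbps.

    24, 25, and 30 fps use the standard row; 48, 50, and 60 fps use the
    high row. Anything above 30 fps is treated as "high" (an assumption).
    """
    tier = "high" if fps > 30 else "standard"
    return RECOMMENDED_MBPS[resolution][tier]


print(recommended_bitrate("1080p", 25))  # standard frame rate row
print(recommended_bitrate("2160p", 60))  # high frame rate row
```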

Now, the note about 4K uploads is interesting. It implies that new 4K videos are encoded with VP9.

So the first solution I’ve found is to export your 1080p Full HD videos as if they were 4K.

They may not look beautiful in 4K – or 2K, for that matter – but in FullHD, they will look normal. And because they were uploaded as 4K, YouTube will use the VP9 codec even when they’re played back in 1080p.
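I do this export in my editing program, but the same idea can be sketched with the free command-line tool ffmpeg. The Python snippet below only builds and prints the command without running it. The file names are hypothetical, the 40 Mbps bitrate is simply a value inside the 35-45 Mbps range recommended for 4K, and the choice of the Lanczos scaler and H.264 is an assumption rather than a tested recommendation.

```python
import shlex


def build_upscale_command(src: str, dst: str, bitrate_mbps: int = 40) -> list:
    """Build (but do not run) an ffmpeg command that upscales to 3840x2160."""
    return [
        "ffmpeg",
        "-i", src,
        "-vf", "scale=3840:2160:flags=lanczos",  # upscale to 4K UHD
        "-c:v", "libx264",                        # H.264 for the upload file
        "-b:v", f"{bitrate_mbps}M",               # target video bitrate
        "-pix_fmt", "yuv420p",                    # widely compatible pixel format
        "-c:a", "aac",                            # AAC audio
        dst,
    ]


# Hypothetical file names, for illustration only.
cmd = build_upscale_command("my_video_1080p.mp4", "my_video_2160p.mp4")
print(shlex.join(cmd))
```

Upscaling doesn’t add any real detail. The only point is to make the upload count as 4K.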

I then tried to upload a video in 1440p (2k) with a frame rate of 50fps – and lo and behold – it also used the vp9 codec:

It suggests that the high-resolution processing somehow makes VP9 the default codec. Nice!

But the same video in 1080p 50fps had the avc1 codec applied instead.

I also tried uploading a 1440p 25fps video with the recommended bitrate settings, and that video was encoded with the avc1 codec as well.

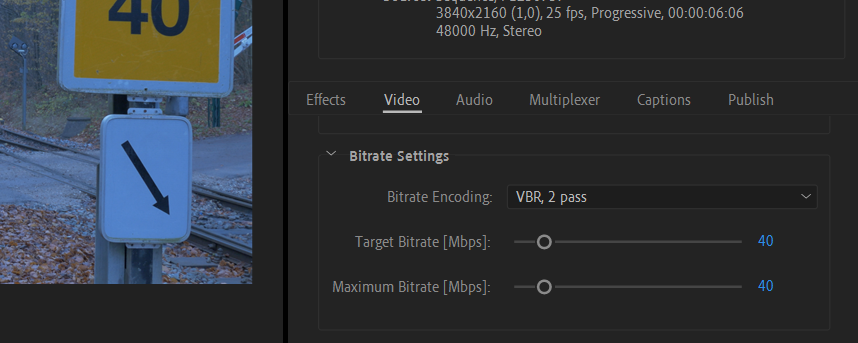

So I thought that maybe if I fiddled with the bitrate, I might be able to force YouTube to use VP9.

So I exported the video as FullHD but with the bitrate settings used for 4K:

But no luck! The bitrate settings alone don’t decide the codec applied by YouTube.

This suggests that resolution rather than bitrate is what triggers VP9 by default, and that anything above 2K is the safest choice.

Conclusion

So what can we learn from this? Well, a couple of things:

YouTube doesn’t like randomness in video footage because of the interframe compression. Because of this, it is not wise to add grain to your film.

Adding noise to reduce banding is only advised for still photos – not video.

You should also always remember to expose correctly, so your footage isn’t underexposed and noisy. Check out my guide to shooting in the dark to reduce grain.

The vp9 codec offers better image quality than the avc1 codec.

If your channel is big enough, you might be lucky and have the vp9 codec on as default. I still need proof of this.

There seem to be two ways to manually trigger the vp9 codec for your footage during the upload process:

- Upload your video in 4K (2160p).

- Upload your video in 2K (1440p) at 50 fps. 48 fps might also work, but I haven’t tested this.

In other words, low-resolution video doesn’t seem to trigger the VP9 codec, so for the best viewing experience, upload in the highest possible resolution.
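The test results above can be summed up as a small rule of thumb in Python. To be clear, this is not YouTube’s actual logic, which is undocumented and may change at any time. The function name is hypothetical, and the rule covers only the handful of uploads tested in this article; readers in the comments below report different outcomes.

```python
def likely_codec(height: int, fps: float) -> str:
    """Guess the codec based only on the test uploads described in this article."""
    if height >= 2160:
        return "vp9"   # 4K uploads
    if height >= 1440 and fps >= 50:
        return "vp9"   # the 1440p 50 fps test (48 fps is untested)
    return "avc1"      # the 1080p tests and the 1440p 25 fps test


for height, fps in [(2160, 25), (1440, 50), (1440, 25), (1080, 50)]:
    print(f"{height}p at {fps} fps -> {likely_codec(height, fps)}")
```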

Did I miss anything? Do you have a better way? Or did you spot a fault in my approach? Please let me know in the comments.

Great piece of information. Tried it out and it works the way you mentioned. At least until YouTube moves the yardstick for VP9 compliance, it should be good :-). Thanks for the effort.

Hi Hari.

I’m glad to hear you found it useful.

Best wishes and take care,

Jan

“The vp9 codec offers a much higher image quality than the av1”

This is completely false. AV1 is a much newer codec that offers much better quality than VP9 at equivalent bitrates. That is the entire reason YouTube added AV1 support in the first place. Where did you get this idea that AV1 is somehow worse?

Also, all videos YouTube encodes in AV1 also have a VP9 copy stored, for devices that are not powerful enough to decode them in AV1 (as AV1 takes more power from the client device to decode than VP9 does).

If you go on YouTube and go to Settings > Playback and performance, there is an “AV1 settings” option. The default is auto, and with this setting YouTube will show you AV1 (on videos that support it) at all resolutions up to the highest YouTube thinks your computer can handle. At resolutions higher than this YouTube will show you VP9. If you change this to “Prefer AV1 for SD”, it will show you AV1 (again, only on videos that support AV1) at 480p and lower, and all higher resolutions will be VP9. Setting it to “Always prefer AV1” will show you AV1 at all resolutions on all videos that support it, regardless of how powerful your computer is.

In the part of the article where you upload the same video in 4k, 1440p, and 1080p, and you see VP9 on the 4k and 1440p upload, but AV1 on the 1080p upload, they were probably all available in AV1, but your AV1 setting was on auto and YouTube decided that your PC is only powerful enough for AV1 at 1080p.

(Also quick aside here, in the article you refer to 1440p as 2k, but 2k is actually 1080p, not 1440p)

Hi CoasterKing

Thank you for your comment. I think you’re confusing AVC1 with AV1 (aka AV01). It’s true that AV1 offers better quality than VP09 (and with time probably even at lower bit rates as well). But the older AVC1 codec didn’t. That’s why I did these tests for this article.

Luckily, the older AVC (h.264) codec seems to have been phased out, so this article should probably be updated with a box explaining this at the top. I’ll make sure to do so asap.

Best, Jan

You are 100% correct, completely forgot h264 is sometimes referred to as avc1, I actually realized a few days later that I had made that mistake. Also I was pretty tired when I wrote that so that probably didn’t help. VP9 is definitely better than h264.

Also, I’m not sure h264 has been phased out on youtube, I still occasionally see new videos that are only available in h264.

You have a typo in the article. “The vp9 codec offers a much higher image quality than the av1.” I’m pretty sure you meant “AVC1.”

Hi.

Nice catch! You’re absolutely right. I’ve corrected the typo. Thanks for bringing this to my attention.

Best, Jan

Well, even if we have a force vp9 option, we probably still need to force av01 to get better quality. Or in the future, vp9 could be the default codec for small YouTubers, and the big YouTubers can have the av01 codec! I hope we can actually force av1 in the future!

This topic is really getting on my nerves. What I discovered lately is very strange. I have a cartoon channel where I upload animations. One series is about the cosmos, so it has a lot of small particles in it (stars, comet tails etc.). And I always struggle to upload it in the best quality. It looks like the AVC codec works MUCH better with this kind of material than VP09. What is more, one of my cosmic videos is in AVC1 (looks good), another is in AV01 (looks good). But now I try to upload a new one and YT is constantly putting it in VP09, which looks horrible. What I discovered is that YT codes the video by looking at the beginning. And in this new video I don’t have any stars at the beginning, so the algorithm is basing its decision on those starting frames. The previous videos have stars from the beginning, and so the codec choice makes more sense. So now I need to find a way to trick YT into picking the right codec :/

Hi Cezary

Yeah, I guess it’s just the nature of technology. The algorithms change all the time and all we can do is to adapt.

It does sound interesting though, that YouTube should base the algorithm on the content of a video from the first few seconds instead of the resolution and/or codec. This is news to me. Have you experienced this all the time, or is it since a recent algorithm change, do you think?

Best, Jan

So the whole theory that a “big channel” automatically gets vp9 is probably not true. I have seen channels with only 200 subs and they have the vp9 codec. Could it be because they are Apple Macbook users? I use a PC and my videos still come out avc1. I have searched all over YT and still come across this problem.

Hi Harold.

Thank you for your comment.

A lot of things have happened since I initially wrote this article. First, YouTube has included a new codec called AV1 (aka AV01), not to be confused with the old AVC1 I write about in the article. AV1 offers better quality than VP09, even at lower bit rates.

This could be the reason why you’ve seen channels with 200 subs now having the vp9 codec as default – i.e. if you are sure it wasn’t a 4K video you watched on the channel?

Best, Jan

Thanks for the reply. I will be testing out my videos in the next few days and I will upload to see the difference. Thanks for this article.

With my own research I was able to conclude that it’s not so much the “big channels” that get the VP09 codec but rather the video’s popularity that determines its codec.

If the video becomes more popular it will get the VP09 codec. So while having a big channel would definitely help, I don’t think it’s the actual reason.

My channel only has, as of now, 180 subscribers, out of the 17 videos I have, 3 have done ‘well’ in views (over 500) and all three have the VP09 codec while the rest (less than 500 views) have the AVC1 codec. One of those videos is from over 5 years ago too.

That makes a lot of sense. That’s probably also why the “big channels” have them because if you have an established audience you’ll get thousands of views in a couple of hours.

I always upload 1440p 30fps and YT always uses the VP09 codec for that. If I upload 1080p, it’s always AVC1 though. So I guess there is a difference for YT between 1440p 25fps and 30fps. Cheers.

Hi Uncle with the long beard

I’m not sure I understand your post. First, you talk about uploading in two different resolutions, and then you conclude that there is a difference in the codec based on the frame rate?

Could you please elaborate? Thanks for your reply.

Best, Jan

I think he’s saying that 1440p @30fps = VP09, 1440p @25fps = AVC1 & 1080p @30fps = AVC1 therefore to be dealt AVC1 the video needs to be lower than 1440p OR lower than 30fps.

Or perhaps more accurately AVC1 when resolution<X OR framerate<Y where 1080p<X<1440p and 25fps<Y<30fps (ie we don't know where 29.97fps or 1200p fit into that).

Nice article – thanks

After doing some experimenting myself, I discovered how to get vp09 codec on my streaming – not uploading completed videos but streams which are viewed later…

Go to studio.youtube.com

Go to “Create new stream key”

leave un-checked “Turn on manual settings”

I then stream from OBS at 1920×1080 30fps at 12mbs video and 192kbps audio

and the video gets encoded as vp09.

I hope this helps someone else.

Aaron

Hi Aaron.

Thank you for sharing the nice tip. Maybe it can help others as well, as you say 🙂

Best, Jan

Thanks for the helpful research and article.

The stats for nerds tip helped (I had to right-click on the video to get the menu), and it confirmed my 1080p, 30fps had the AVC1 codec applied.

Based on some other useful but less informative articles I’d found through Google, I’d already decided to export with “maximized” settings from my program, but this helps to validate upgrading the output because of the codec is the recommended current approach to get VP09 applied (fingers crossed).

Many thanks, it’s frustrating to see many hours of work “downgraded” by codecs and compression at the public end of the workflow.

Good stuff, hoping it works, but suspecting it will.

Cheers.

Hi.

I hope it worked? May the force be with you – always!

Best, Jan

🙂

It just has to be 1440p or better, then you get VP9. Framerate doesn’t matter, source bitrate doesn’t matter, channel subs don’t matter.

I think it’s not worth the effort trying to trick YouTube into VP9. They can change their software any time and re-encode your video to AVC or whatever they want.

My workaround is to upload CineForm video in a MOV container with 24bit stereo PCM audio. Now this yields quite some huge files, but YouTube will store this file somewhere in its servers and re-encode from it whenever they change the delivery format.

Not a solution for streams, of course and unfortunately.

Adding to my previous comment: you can also switch YT to prefer VP9 playback indirectly here: https://www.youtube.com/account_playback – note that this is a setting in the viewer’s account, not in your account when uploading.

On my Thinkpad t470 laptop, VP9 is only partially supported in the GPU decoding and I guess this is why all browsers get the AVC1 stream from YouTube, no matter which setting I use.

Hi Niels.

Thanks for the tips man. I think it will help a lot of readers. Really appreciated! 🙂

Best, Jan

Hi, does anyone have a working solution to this problem? All videos on my channel are also affected by this downgrading codec that YT uses 🙁 One thing I noticed is that when I play the video on my MacBook laptop, the video looks smooth. But when viewed on mobile phones and iPad, it looks pixelated (in the parts with moving objects).

I’ve checked videos that get millions of views, and they are still using the avc1 codec. So it’s not a matter of how popular your video is.